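| Mashiho (마시호, MASHIHO) | |
|---|---|
| Birth name | 髙田 真史帆 (타카타 마시호, Mashiho Takata) |
| Born | March 25, 2001 (age 21), Mie Prefecture |
| Nationality | Japanese |
| Physical | 169 cm, 59 kg, blood type AB, shoe size 275 mm |
| Family | Parents |
| Education | Hinode High School (graduated) |
| Position | Vocalist, dancer |
| Debut | August 7, 2020, with TREASURE's first single album THE FIRST STEP : CHAPTER ONE (+654 days since debut, 1st anniversary) |
| Nicknames | 기조링 (Gijoring), 마쇼 (Masho), 햄토리 (Hamtori), 마토리 (Matori), 진사범 (Jinsabeom), 마시 (Mashi), 마모밍 (Mamoming), 귀요미 (Gwiyomi), 마요미 (Mayomi) |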

1. Overview

A member of TREASURE, a 12-member boy group under YG Entertainment.

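2. Pre-debut

2.1. Participation in YG Treasure Box

He had been a trainee under YG Entertainment's Japanese branch since 2015, when he was in his second year of middle school.

In 2018 he competed on the survival program YG Treasure Box and was eliminated in the final, but he was additionally selected as a Magnum member and so joined TREASURE.

Counting the period up to TREASURE's official debut, he spent about six years as a trainee.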

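3. Position

3.1. Vocals

He has a pretty, delicate voice and a relatively high vocal range.

In a competition round on YG Treasure Box, he handled the opening of 'Dean - D (Half Moon)' so well that he earned high praise from the judges and went on to win the round.

'환상', a song written by Asahi and Mashiho and performed in the music-production episode of D3분 트레저, suits Mashiho's vocal range well, and he performs it so well that fans are very fond of it.

His voice is also good, so fans are eagerly waiting for the full version to be released.

His English pronunciation is good, so he also handles pop songs well.

Here too, he tends to sing songs that suit his delicate voice.

(TREASURE MAP EP28, from 9:44: Tone Stith - Perfect Timing. YG Treasure Box interview + performance video, from 0:31: Jason Derulo - Want to Want Me.)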

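3.2. Dance

He began learning to dance through acrobatics at age five, and from then on he reportedly went to dance class after school every single day of the week.

He says he was busier then than he is now.

He learned a wide range of dance genres, including ballet, jazz, and b-boying. (TREASURE T-TALK, from 1:08)

He occasionally posts dance videos on the members' Twitter account, and perhaps because he has been dancing since childhood, he also acts as a dance teacher within the team.

In the nomination profile done during the first-anniversary relay V LIVE, one question was 'Which member memorizes new choreography the fastest?', and many members answered Mashiho.

Before debut, in the YG Treasure Box final, he took the stage in the dance position with <미쳐가네> and placed first in the audience vote for the dance position. (YG Treasure Box EP10, from 42:06)

His acrobatics are also at a very high level.

On TREASURE MAP he often does tumbling as a celebration, and in the web drama audition episode (EP35) he showed b-boying as his special skill.

He also showed a freeze in the behind-the-scenes video for the 음 (MMM) music video, and he did tumbling when he appeared on You Hee-yeol's Sketchbook.

Within the team, reportedly only Mashiho and Jihoon can do the b-boy move 'freeze'.

(TREASURE MAP EP35, from 10:50. 음 (MMM) M/V behind-the-scenes, from 2:02. You Hee-yeol's Sketchbook TREASURE episode, from 3:09.)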

4. Character

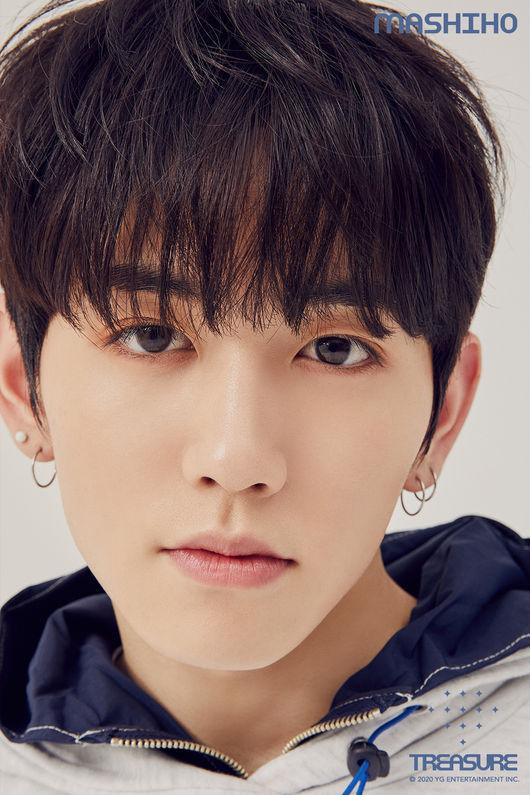

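4.1. Visuals

During YG Treasure Box he was voted number one in visuals. He has large, lovely eyes with double eyelids.

According to fans, his eyes have such a pure look that he is easy to recognize even when he is wearing a mask.

He also has the fairest skin among the members.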

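4.2. Personality

- He is cute and shows a lot of aegyo.

- He is often seen being positive and high-energy, which closely resembles SpongeBob's personality. He himself said with a laugh that he thinks his personality is similar to SpongeBob's. (TREASURE PR video, from 1:14)

- Although he is cute and shows a lot of aegyo, he is also mentally strong and very attentive. The aegyo seems to come naturally because his way of speaking Korean is itself cute, and his everyday personality is closer to cool. On V LIVE and TREASURE MAP, he can very often be seen looking after the other members.

- Other members often say that Mashiho is mentally very strong. He has also been picked as the sweetest member of the team. (Seventeen interview, TREASURE episode, from 3:54)

- His MBTI is ENFP.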

5. Activities

5.1. Music videos

| Year | Artist | Song | Notes |
|---|---|---|---|
| 2017 | AKMU | 사춘기 : 겨울과 봄 사이 | |

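6. Trivia

- He reportedly likes sports such as basketball and acrobatics. Judging from old photos that show him in a basketball club, he appears to have started basketball at a young age.

- He likes cleaning and tidying and does both often. He said he takes after his parents, who are also neat and clean frequently.

- He is said to be good at sports because his name contains both Messi (메시) and Ronaldo (호날두). (TREASURE MAP EP16, from 6:05)

- He carries around a very small bottle of perfume. A fragrant man~

- He appeared as a barista in the music video for AKMU's '사춘기 : 겨울과 봄 사이'.

- The members find him very cute. On TREASURE MAP, Jihoon can often be seen saying things like "I want to bite you!" and Choi Hyunsuk cooing over him.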

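- He, Yoon Jaehyuk, and Park Jeongwoo are the left-handed members.

- He said on Weverse that he gets hot easily, so he likes the cold, and that winter is his favorite season because he can wear many layers.

- Because Ma (마) is a Korean surname and Siho (시호) is a common given name, people who do not know TREASURE well can easily mistake him for Korean on hearing his name alone.

- He appears to excel across sports. In TREASURE MAP EP53 the members played soccer, basketball, and bowling against each other, and he was good enough to beat the other members.

- On January 27, 2022, he tested positive for COVID-19 along with fellow members Choi Hyunsuk and Junkyu. In a statement, the agency said that all of them had completed COVID-19 vaccination, and that both the confirmed cases and the members who tested negative had no notable symptoms and were in good health. His full recovery was announced on February 3, 2022.

- He attended the same high school as fellow member Yoshi. Since both were trainees under YG Entertainment's Japanese branch, they reportedly ran into each other at school from time to time.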

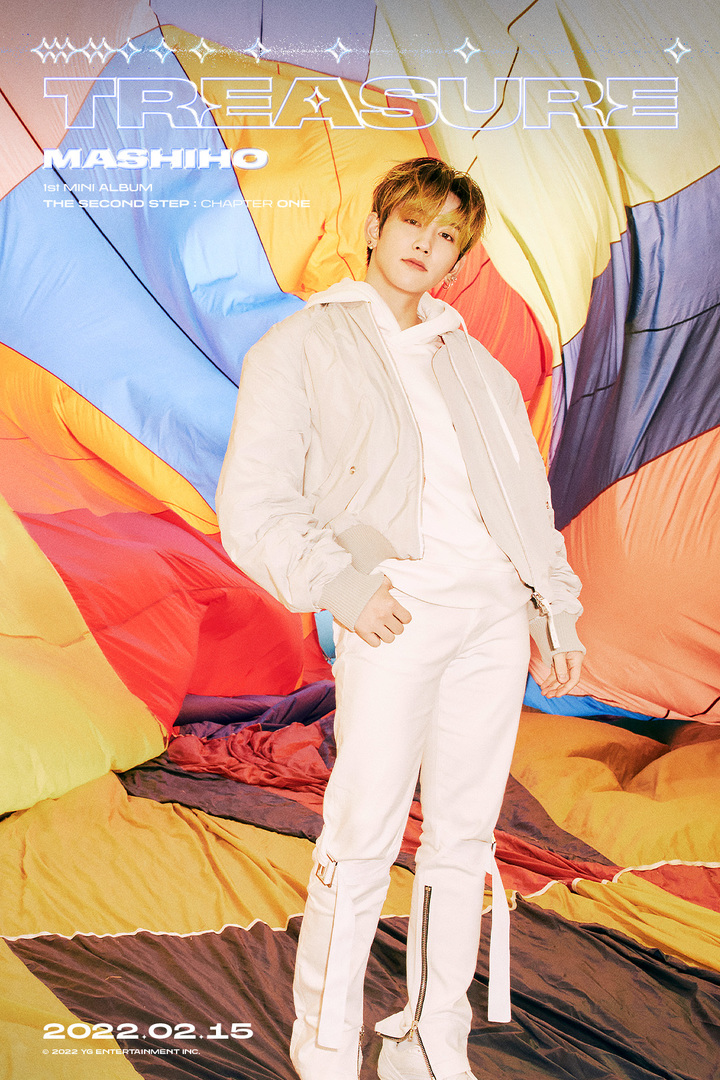

7. Past profile photos

- THE FIRST STEP : CHAPTER ONE

- THE FIRST STEP : CHAPTER TWO

- THE FIRST STEP : CHAPTER THREE

- THE FIRST STEP : TREASURE EFFECT

- THE SECOND STEP : CHAPTER ONE
