|

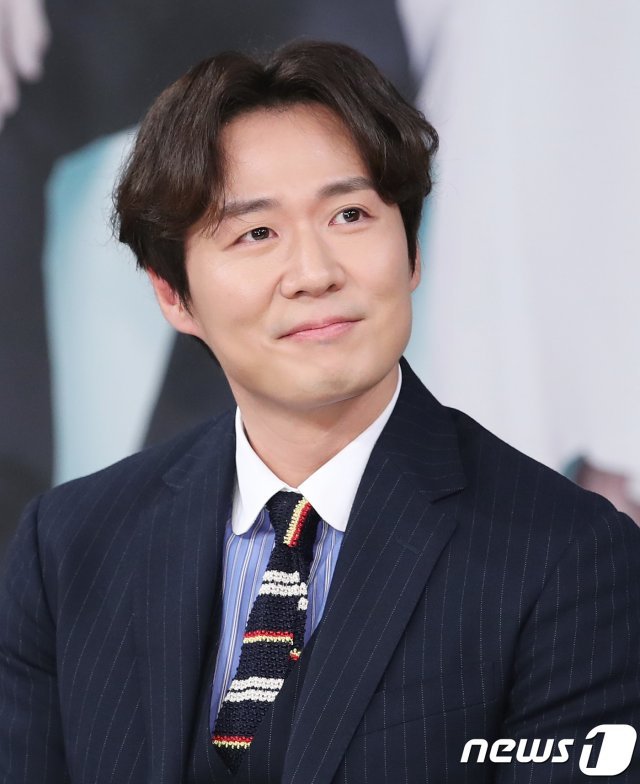

연정훈

延政勳 | Youn Jung-hoon |

|

|

출생

|

1978년 11월 6일 (43세)

|

|

서울특별시

|

|

|

국적

|

대한민국

|

|

본관

|

곡산 연씨 (谷山 延氏)

|

|

신체

|

179cm, 75kg, 270mm, O형

|

|

가족

|

아버지 연규진, 어머니 전명순, 누나 연정아

|

|

배우자 한가인(2005년 4월 26일 결혼 - 현재)

딸 연재희(2016년 4월 13일생) 아들 연재우(2019년 5월 13일생) |

|

|

학력

|

명지대학교 (산업디자인학 / 학사)

명지대학교 대학원 (제품디자인학 / 석사) |

|

종교

|

개신교

|

|

병역

|

대한민국 육군 제52보병사단 병장 만기전역

(2005년 11월 1일 ~ 2007년 10월 31일) |

|

데뷔

|

1999년 SBS 드라마 '파도'

데뷔일로부터 +8429일째 |

|

취미

|

드럼 연주, 카레이싱, 사진

|

|

특기

|

검도

|

|

MBTI

|

ENFJ

|

1. 개요

대한민국의 배우.

한가인의 남편.

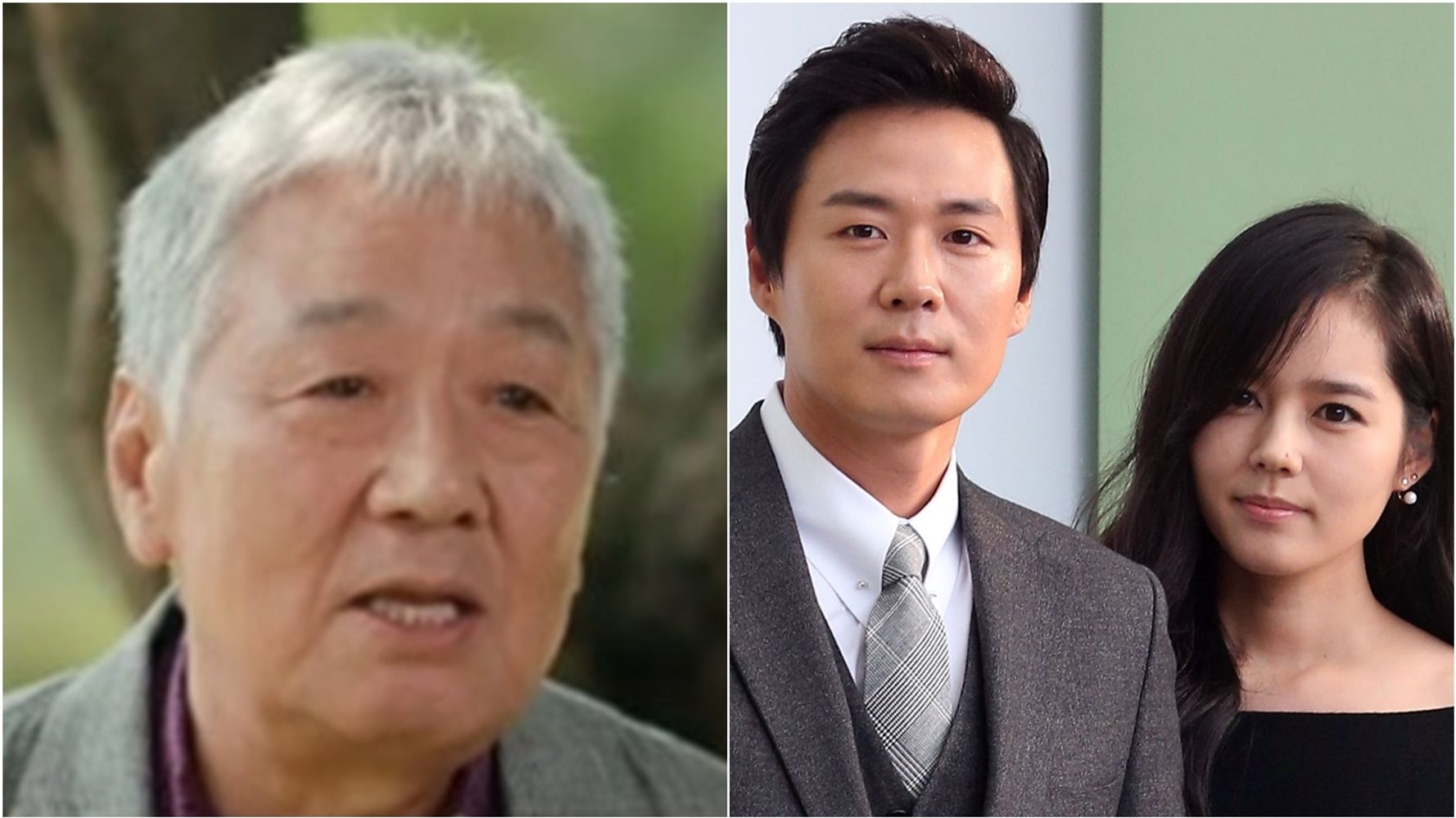

배우인 아버지 연규진을 이어 2대째 연기자를 하고 있다.

주요 작품으로는 슬픈 연가, 에덴의 동쪽, 뱀파이어 검사 등이 있다.

한가인의 남편.

배우인 아버지 연규진을 이어 2대째 연기자를 하고 있다.

주요 작품으로는 슬픈 연가, 에덴의 동쪽, 뱀파이어 검사 등이 있다.

2. Life

In 2005 he married 한가인, who was at the height of her popularity at the time.


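According to an interview, his father told him, "If you think she is the one you will marry, do not hesitate," so he married right away. Because both he and his father know how the entertainment industry works, the marriage is often described as a choice that actually protected 한가인, and reporters tend not to needle him about it.

한가인 was only 23 at the time, and he was called a "thief" (도둑놈, roughly "cradle-robber") so often that many people assume he is much older than she is. In fact he is only four years her senior, and he was 26 at the wedding, which was young for a groom even then.

The relationship became a major story when he abruptly held a press conference announcing the marriage while he was filming the drama 슬픈 연가. The two had already been dating publicly for several months and had talked about their relationship on talk shows, and marriage rumors had circulated before the press conference.

After winning Best New Actor at the 2003 KBS Drama Awards, he ended his acceptance speech with a barely audible "현주야 사랑해" ("I love you, 현주", 현주 being 한가인's real given name), which drove a stake through the hearts of single people everywhere. 한가인 won Best New Actress for the same drama that night, but she reportedly did not hear him at the time.

He enlisted after the wedding and served with the 52nd Infantry Division as a full-time reservist (상근예비역), working as a PX clerk. Since even married men without children usually serve as regular soldiers, many see this too as a stroke of luck. 한가인 reportedly came to see him almost every day while he commuted to his unit. It supposedly got to the point where the regimental commander called him in and asked that she stop visiting because it was affecting the soldiers' morale. Though the soldiers were probably looking forward to her visits all the same. We already know.

On April 20, 2011, 한가인 appeared on MBC FM4U's 푸른 밤 정엽입니다 and said that her hands are never dry from housework, so after years of being cursed for no reason he was finally being cursed for a reason.

For years, articles about the couple's plans for children appeared regularly, but the actual answer was always some vague variation on "maybe next year." The comment sections, predictably, were a feast of crude jokes and abuse. The usual rumors that follow childless celebrity couples (infertility on one side, or marital trouble) circulated as well.

In practice, 연정훈's signature work is the cable drama 뱀파이어 검사, while 한가인's hits are 해를 품은 달 and 건축학개론.

The May issue of 주부생활 스타일러 reported that 한가인 was finally pregnant after nine years of marriage, and on April 22, 2014, online news outlets reported that she was seven weeks along. Because the whole country was in mourning over the Sewol ferry disaster, the couple had not even told their agency. She miscarried in May 2014.

On April 13, 2016, eleven years after the wedding, the couple finally had a daughter. Her prenatal nickname was reportedly 볼트 (Bolt), after the title character of the Disney 3D animated film.

More recently a new angle has surfaced: he is the greatest enemy of Korean men, having achieved "좌가인 우태희" (한가인 on his left, 김태희 on his right).

His father 연규진 more or less does whatever his daughter-in-law 한가인 tells him to. It is hard to tell whether he is magnanimous or simply her errand boy. 연규진 is only human, though, and eventually admitted as much on air.

Unlike his wife, whose acting has drawn some criticism, 연정훈 has not faced any major controversy or criticism over his performances. In the 2010 SBS drama 제중원 he was well received as 백도양, a doctor from an aristocratic (사대부) family.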

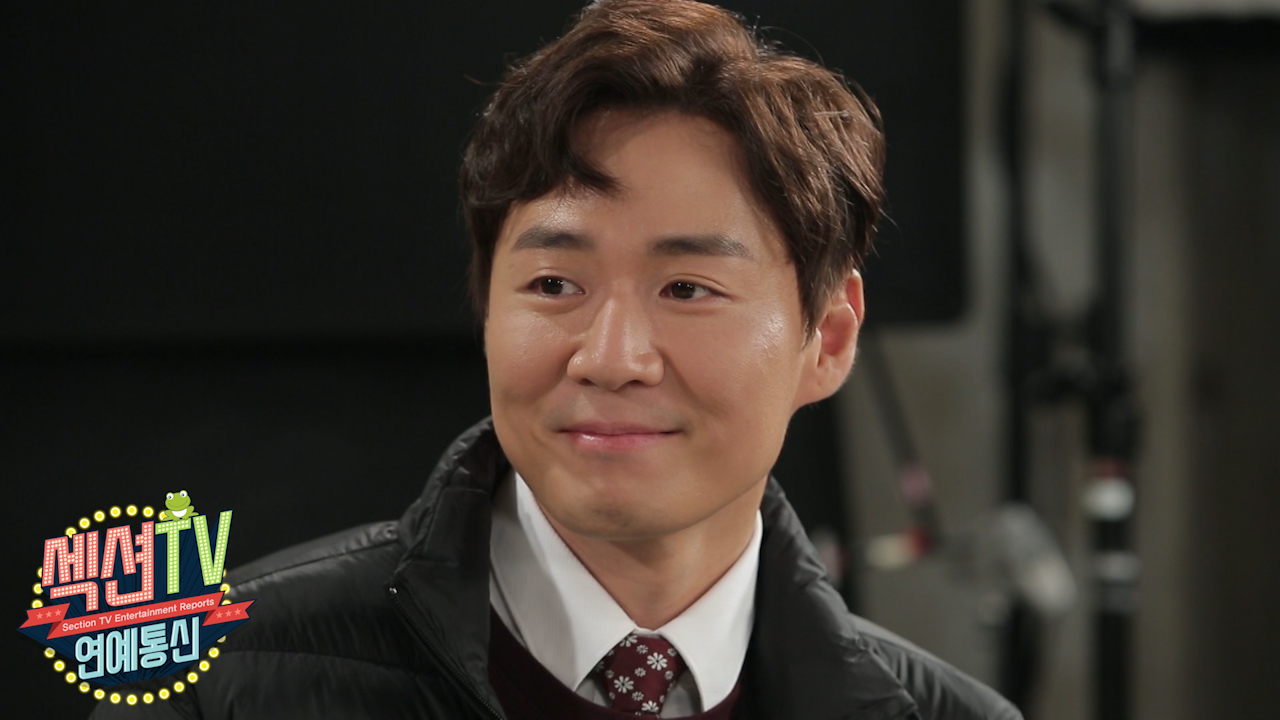

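From February 15 to 22, 2015, he appeared on 런닝맨 as 유재석's partner in the New Year cooking contest special. He looked older than in the photo above and had put on a little weight. 유재석 teased him for how clumsily he prepared potatoes.

As an aside, he was once photographed staring blankly into the distance despite standing beside two beautiful actresses, and the photo is used as a joke with the caption "Heh, 한가인 is waiting for me at home anyway."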

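3. Hobbies

He is a serious car enthusiast whose hobby is collecting supercars. Cars known to have passed through his hands include the Lamborghini Murciélago, Bentley Flying Spur, Porsche Carrera GT, Ferrari F40, Ferrari 360, Ferrari 488 GTB, and Mercedes-Benz SL65 AMG.

As an aside, when investigators searched the warehouse of Chairman 채 of 도민저축은행, they found a stolen Porsche that reportedly belonged to 연정훈. During his military service, a cable entertainment program aired footage of him commuting in the Flying Spur. In May 2016 he was spotted driving the new Volvo XC90.

That passion led to his formal motorsport debut in 2010, when he entered the Super 6000 class, the top class of the 슈퍼레이스 (Superrace) series, with the 시케인 racing team. He then spent some time in the international Ferrari one-make Challenge series before returning to 슈퍼레이스 in 2017 in the GT class with the 인디고 racing team. In 2014 he competed as a driver in the Ferrari Challenge.

Perhaps because of this background, he joined XTM's 탑기어 코리아, which began airing on August 20, 2011. He hosted capably alongside 김진표 and was well received by fans, but he left the show because of scheduling conflicts starting with season 4, which premiered on April 28, 2013.

He is also interested in cameras and photography, and is often seen shooting with high-end cameras. In November 2013 he won the grand prize in the "Innovative Sweden" photo contest run by the Embassy of Sweden in Korea and the camera company Hasselblad Korea. The catch is that the model in the winning photo was 한가인: the first prize was a round-trip ticket to Sweden and five nights of accommodation there, which is worth far less than her modeling fee. Since Hasselblad may use contest entries for promotion, the company came out ahead, in effect trading the first prize for 한가인's modeling fee.

On November 10, 2019, he made a special appearance as the photographer for the sixth-anniversary calendar shoot on 슈퍼맨이 돌아왔다, and on 1박 2일 he always brings a high-end camera and shoots frequently.

He is something of a watch enthusiast too. On 탑기어 코리아 he often wore a Patek Philippe Nautilus, and in the drama 금 나와라, 뚝딱!, in which he co-starred with 한지혜, he wore an F.P. Journe. That watch was never officially imported into Korea and sells for roughly US$45,000 overseas.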


4. Filmography

4.1. Film

| Year | Title | Role | Notes |
|---|---|---|---|
| 2001 | 조폭 마누라 | 효민 | Bit part |
| 2005 | 키다리 아저씨 | 김준호 | Lead role |
| 2005 | 연애술사 | 우지훈 | |
| 2013 | 좋은 친구들 | K | Lead role |
| 2016 | 스킵트레이스: 합동수사 | 존 잘 윌리 | US-China co-production; supporting role |

4.2. Television dramas

| Year | Network | Title | Role | Notes |
|---|---|---|---|---|
| 1999-2000 | SBS | 파도 | 나윤호 | Debut |
| 1999 | SBS | LA 아리랑 | | |
| 1999 | SBS | 카이스트 | 양병석 | |
| 2001 | MBC | 뉴 논스톱 | 준수 | |
| 2002 | KBS 2TV | 드라마시티 - 아름다운 청춘 | 신영재 | |
| 2002 | KBS 2TV | 이색극장 두 남자 이야기 | 길소월 | |
| 2003 | KBS 2TV | 드라마시티 - 사로잡히다 | 교도관 (prison guard) | |
| 2003 | KBS 1TV | 노란 손수건 | 윤태영 | Supporting role |
| 2003-2004 | SBS | 흥부네 박 터졌네 | 장현태 | Lead role |
| 2003 | KBS 2TV | 로즈마리 | 장준오 | |
| 2004 | KBS 2TV | 백설공주 | 한진우 | |
| 2004 | MBC | 사랑을 할꺼야 | 연하늘 | |
| 2005 | MBC | 슬픈 연가 | 이건우 | |
| 2008-2009 | MBC | 에덴의 동쪽 | 이동욱 | |
| 2009 | SBS | 드림 | 강기창 | Special appearance (episode 1) |
| 2010 | SBS | 제중원 | 백도양 | Lead role |
| 2011 | OCN | 뱀파이어 검사 | 민태연 | |
| 2012 | MBN | 사랑도 돈이 되나요 | 마인탁 | Lead role |
| 2012 | OCN | 뱀파이어 검사 시즌2 | 민태연 | |
| 2013 | MBC | 금 나와라, 뚝딱! | 박현수 | |
| 2014 | TVB | 사랑의 순간 (爱情來的時候) | 김동성 | Chinese drama; lead role |
| 2015 | SBS | 가면 | 민석훈 | Lead role |
| 2015 | OCN | 처용 시즌2 | 김수겸 | Special appearance (episode 5) |
| 2016 | JTBC | 욱씨남정기 | 이지상 | Special appearance (episodes 9 to 16) |
| 2017 | JTBC | 맨투맨 | 모승재 | Lead role |
| 2017-2018 | SBS | 브라보 마이 라이프 | 신동우 | |
| 2018-2019 | MBC | 내사랑 치유기 | 최진유 | |
| 2019 | OCN | 빙의 | 오수혁 | |
| 2020 | 채널A | 거짓말의 거짓말 | 강지민 | |


5. Other activities

5.1. Television

| Date | Network | Program | Role | Notes |
|---|---|---|---|---|
| May 1, 2003 | KBS 1TV | 아침마당 | Guest | Video |
| June 26, 2003 | KBS 2TV | 행복채널 | Guest | Video |
| May 13, 2004 | KBS 2TV | 해피투게더 시즌1 | Guest | Episode 131 (parts 1 and 2) |
| September 6, 2004 | SBS | 야심만만 | Guest | Episode 76 |
| May 9 to 16, 2005 | SBS | 야심만만 | Guest | Episodes 111 and 112 |
| January 18, 2005 | SBS | 좋은 아침 | VCR appearance | Episode 2036 |
| April 27, 2005 | SBS | 좋은 아침 | VCR appearance | Episode 2104 |
| August 25, 2008 | MBC | 에덴의 동쪽 스페셜 | VCR appearance | |
| January 1, 2010 | SBS | 절친노트 시즌3 | Guest | Episode 56 |
| January 2, 2010 | SBS | 김정은의 초콜릿 | Guest | Episode 85 |
| August 20 to December 3, 2011 | XTM | 탑기어 코리아 시즌1 | Host | |
| October 20, 2011 | KBS 2TV | 해피투게더 시즌3 | Guest | Episode 219 |
| April 8 to June 17, 2012 | XTM | 탑기어 코리아 시즌2 | Host | |
| October 7 to December 16, 2012 | XTM | 탑기어 코리아 시즌3 | Host | |
| October 19 to 26, 2014 | KBS 2TV | 로드 킹 | Cast | Two-part program |
| February 15 to 22, 2015 | SBS | 런닝맨 | Guest | Episodes 234 and 235 |
| May 31, 2017 | JTBC | 한끼줍쇼 | Guest | Episode 33 |
| July 9 to 16, 2017 | SBS | 미운 우리 새끼 | Special MC | Episodes 44 to 45 |
| August 3, 2017 | tvN | 인생술집 | Guest | Episode 30 |
| November 10, 2019 | KBS 2TV | 슈퍼맨이 돌아왔다 | Special appearance | Episode 303 |
| From December 8, 2019 | KBS 2TV | 1박 2일 시즌4 | Regular cast | |

5.2. Radio

| Date | Station | Program | Role | Notes |
|---|---|---|---|---|
| October 21, 2017 | SBS 러브FM | 송은이, 김숙의 언니네 라디오 | Guest | Audio |
| October 9, 2018 | MBC FM4U | 정오의 희망곡 김신영입니다 | Guest | |
| October 9, 2020 | SBS 파워FM | 딘딘의 뮤직하이 | Guest | Video |

5.3. Music releases

| Date | Album | Track | Notes |
|---|---|---|---|
| February 3, 2005 | 슬픈 연가 OST - 2 (soundtrack) | 몇 번을 헤어져도 (건우 Song) | |
| October 22, 2005 | 연정훈 Vol.1 (studio album) | All for you | First studio album |
| November 27, 2013 | Yeon Jeong Hoon (single) | 발장난 | |

5.4. Music videos

| Date | Artist | Track |
|---|---|---|
| October 2, 2005 | 연정훈 | All for you |

5.5. Advertisements

| Year | Company | Brand | Category | Co-star |
|---|---|---|---|---|
| 2000 | SK텔레콤 | SK텔레콤 엔탑 | Telecom carrier | |
| 2003 | 롯데제과 | 롯데제과 위즐 | Ice cream | 봉태규 |
| 2004 | 신성통상 | 신성통상 유니온베이 | Apparel | 김성은 |
| 2005 | KTF | KTF | Wireless internet | |
| 2005 | 해태htb | 야채과일100 | Beverage | |
| 2005 | 론 커스텀 | 론 커스텀 | Apparel | |
| 2009 | 삼성전자 | 삼성전자 하우젠 | Home appliances | 한가인 |
| 2012 | 기아자동차 | 기아자동차 K3 | Automobile | |
| 2013 | 금강제화 | 금강제화 | Fashion | 성유리 |
| 2015 | (주)트루프렌드 에프앤씨 | 눈꽃빙수 - 꽃빙 | Shaved ice (빙수) | |
| 2016 | 지유가오카 | 지유가오카 | Confectionery | |
| 2017 | 헨켈홈케어코리아 | 프릴 시크릿 오브 베이킹소다 | Dish soap | |
| 2017 | Mont Pellier | 몽펠리에 | Apparel | |
| 2020 | 포스트 | 콘푸라이트 | Cereal | |

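5.6. Ambassadorships

| Year | Company / Organization | Ambassadorship |
|---|---|---|
| 2008 | Seodaemun-gu, Seoul | Myongji University (명지대학교) 60th anniversary ambassador |
| 2012 | Jung-gu, Seoul | Korean Red Cross blood donation ambassador |
| 2015 | | China Tourism Year ambassador |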

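6. Awards

6.1. Award ceremonies

| Date | Ceremony | Award | Work |
|---|---|---|---|
| December 31, 2003 | KBS Drama Awards (KBS 연기대상) | Best New Actor | 노란 손수건 |
| December 30, 2008 | MBC Drama Awards (MBC 연기대상) | PD Award | 에덴의 동쪽 |
| November 16, 2013 | 2nd APAN Star Awards (에이판 스타 어워즈) | Best Dresser Award | 금 나와라, 뚝딱! |
| December 30, 2013 | MBC Drama Awards (MBC 연기대상) | Excellence Award, Actor in a Serial Drama | 금 나와라, 뚝딱! |
| April 19, 2014 | Ferrari Challenge race | Winner, Asia-Pacific Round 4 | |
| December 10, 2015 | 28th Grimae Awards (그리메상) | Best Actor | 가면 |
| December 30, 2018 | MBC Drama Awards (MBC 연기대상) | Top Excellence Award, Actor in a Serial Drama | 내사랑 치유기 |
| December 24, 2020 | KBS Entertainment Awards (KBS 연예대상) | Best Entertainer Award, Show and Variety category | 1박 2일 |
| December 25, 2021 | KBS Entertainment Awards (KBS 연예대상) | Excellence Award, Show and Variety category | 1박 2일 |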

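7. Trivia

- On the June 13, 2021 episode of 1박 2일, he revealed that he had been head of the cheerleading squad as a student at Myongji University (명지대학교).

- He was branded the "national thief" (국민 도둑놈) for dating and marrying 한가인, and he is just as famous for his luck with on-screen partners in romance dramas. In 흥부네 박 터졌네 he played the husband of 김태희, 한가인's rival for the title of Korea's representative beauty, and in 사랑을 할꺼야 he and 장나라, the original "nation's little sister," played each other's first loves who marry near the end. Ironically, in 노란 손수건 his character had to part from 한가인's because of a difference in social standing and married 이유리's character instead. Then he married 한가인 in real life, which makes him a national thief indeed. In short, the actresses whose characters he dated or married on screen before his wedding were 김태희, 장나라, and 이유리.

- He also has a curious connection with 송승헌. 송승헌 had already shot the teaser and the theme-song music video for 슬픈 연가 before principal photography when a military service evasion scandal sent him to the army, and 연정훈 took over the role. In a sense he took a senior actor's place. The drama did not live up to the original expectations, but thanks to a solid performance from the hastily cast 연정훈 it ended respectably. 연정훈 then did his own military service. The twist is that his comeback after discharge was 에덴의 동쪽, in which he and 송승헌, with whom things could have been awkward, played brothers. 송승헌, however, was reportedly grateful to 연정훈 for cleaning up the mess he had caused. Both gave strong performances, and 송승헌 won his first Grand Prize (대상) at the 2008 MBC Drama Awards. A situation that could have soured was resolved in the best possible way. 연정훈 thereby became the first actor to have co-led a drama with both 권상우 and 송승헌, and in both dramas he happened to play the character who stands in the way of true love.

- In 2009, 윤아 of 소녀시대 named 연정훈 as her ideal type in "이상형 월드컵" (Ideal Type World Cup), the signature segment of 신동엽, 신봉선의 샴페인. She added that she is very fond of 한가인 as well.

- When it was announced that he would join 1박 2일 시즌4, he was considered the most surprising casting choice. Once the show began airing, however, he turned out to have good chemistry with the other members, and his on-screen personas, 어르신 ("the elder") and 열정훈 (a pun on 열정, "passion," and his name), landed well, earning praise that he suits the show. 한가인 reportedly said that variety shows would suit him, judging by how he is in everyday life rather than as an actor, and he himself had wanted to do variety.

- He is the most driven member on 1박 2일, enough to earn the nickname 열정훈. He hates losing, so once the switch flips he does not know how to stop. Perhaps thanks to that passion, he has one of the best game records among the members.

- On the April 10, 2022 episode of 1박 2일, he said that he and 한가인 started dating on May 13, 2003. That falls in the early part of the run of 노란 손수건, the drama they appeared in together, which premiered on February 3.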
